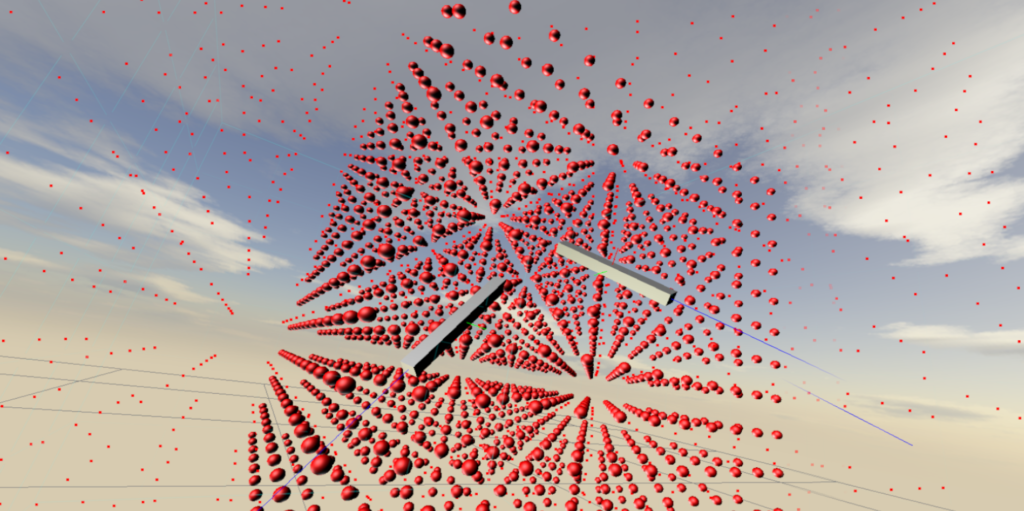

Here is a short article I wrote about my work with Virtual Reality, and collaborations with game designer Johan Warren.

Here is a short video demonstration of the game.

My work in electronic music and instrument building often involves aspects of writing – or hacking apart – computer code, and programming small embedded microprocessors. This kinetic sculpture is the result of experiments in controlling robotic movement and deep learning algorithms in computer vision.

The piece uses a camera coupled to a computer vision algorithm that identifies the most conspicuous, attention-grabbing element in the visual field (Itti et al., IEEE PAMI, 1998). Camera input is split into five parallel streams (color, intensity, motion, orientation, and flicker), which are visible at the bottom left of the screen. These streams are then weighted and fed into a neural network to generate a saliency map, visible on the right side of the screen. The robot arm is programmed to move towards the most salient object, marked by the green ring on the left of the screen.

This simple coupling – computer vision and movement – generates eerily life-like behaviors that are often delightfully unpredictable.
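For readers curious about the mechanics, here is a minimal sketch of the saliency idea described above. It is not the code running in the sculpture: it assumes NumPy, computes only three crude streams (intensity, red/green color contrast, and frame-difference motion), and swaps the neural network for a plain weighted sum. All function names and weights are hypothetical, chosen for illustration.

```python
import numpy as np


def normalize(feature_map):
    """Rescale a feature map to [0, 1]; a flat map becomes all zeros."""
    span = feature_map.max() - feature_map.min()
    if span == 0:
        return np.zeros_like(feature_map, dtype=float)
    return (feature_map - feature_map.min()) / span


def feature_maps(frame, previous_frame):
    """Compute three crude feature streams from two RGB frames (floats in [0, 1])."""
    r, g = frame[..., 0], frame[..., 1]
    intensity = frame.mean(axis=-1)
    prev_intensity = previous_frame.mean(axis=-1)
    return {
        "intensity": intensity,
        "color": np.abs(r - g),  # red/green contrast only (a simplification)
        "motion": np.abs(intensity - prev_intensity),  # simple frame difference
    }


def saliency_map(frame, previous_frame, weights):
    """Weighted sum of normalized streams (stands in for the neural network)."""
    total = np.zeros(frame.shape[:2], dtype=float)
    for name, fmap in feature_maps(frame, previous_frame).items():
        total += weights.get(name, 0.0) * normalize(fmap)
    return total


def most_salient_point(saliency):
    """Return (row, col) of the saliency maximum: where the arm would aim."""
    row, col = np.unravel_index(np.argmax(saliency), saliency.shape)
    return int(row), int(col)


# Example: a dark scene in which a small red patch suddenly appears.
previous_frame = np.zeros((48, 64, 3))
frame = previous_frame.copy()
frame[10:14, 40:44, 0] = 1.0  # red channel only
weights = {"intensity": 1.0, "color": 1.0, "motion": 1.0}  # hypothetical weights
saliency = saliency_map(frame, previous_frame, weights)
target = most_salient_point(saliency)
```

In this toy scene all three streams agree, so `target` lands on the top-left corner of the red patch. Changing the weights shifts what the system finds interesting, which is one plausible source of the unpredictability mentioned above.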

Below is some documentation of early prototypes.

We had a fantastic – and big! – group of students in this year’s Laptop Ensemble. You can visit the class website here for more information, and watch a few videos of rehearsals and the final presentations below.

Dr. Larry Wilen and I co-teach a course in musical acoustics and instrument design in Yale’s Center for Engineering Innovation and Design. Here’s a brief video from a story WNPR produced on the class.

I have been working with a team of undergraduate students to design an interface for music production and sound synthesis using HP’s immersive computing platform, the Sprout. We have been using the depth camera to identify and segment physical objects in the workspace, and the downward-facing projector to map animations onto the surfaces of those objects. This project is part of the applied research grant Blended Reality, which is co-funded by HP and Yale ITS.
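As a rough illustration of the depth-based segmentation step, here is a minimal sketch. It does not use the Sprout SDK. It assumes NumPy, a depth image in millimetres from a camera looking straight down at a flat work surface, and a single object in view. The function names, the 10 mm threshold, and the synthetic depth values are all hypothetical.

```python
import numpy as np


def segment_objects(depth_mm, surface_mm, min_height_mm=10.0):
    """Mask pixels that sit at least min_height_mm above the flat work surface."""
    height = surface_mm - depth_mm  # camera looks down, so nearer means taller
    return height >= min_height_mm


def bounding_box(mask):
    """Return (top, left, bottom, right) of the masked region, or None if empty."""
    rows = np.flatnonzero(mask.any(axis=1))
    cols = np.flatnonzero(mask.any(axis=0))
    if rows.size == 0:
        return None
    return int(rows[0]), int(cols[0]), int(rows[-1]), int(cols[-1])


# Example: an empty surface 600 mm from the camera with one 50 mm tall block on it.
depth = np.full((60, 80), 600.0)
depth[20:30, 35:50] = 550.0
mask = segment_objects(depth, surface_mm=600.0)
box = bounding_box(mask)
```

The resulting box is the region a projector could then be asked to fill with an animation. A real setup would also need camera-to-projector calibration and a way to separate multiple objects, both of which are left out here.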

Documentation and video of my piece for laptop ensemble, performed by Sideband.

Piano improvisation and realtime granular sound file processing. This is a performance in Princeton’s Taplin Auditorium.

I had the opportunity to work with the dancer & choreographer Rebecca Stenn over a two-month period to create this new piece. It is for piano, live electronics, and solo dancer, and it incorporates improvisation in both mediums. It was premiered at the Joyce SoHo as part of the SONiC festival, and subsequently performed at the 92nd Street Y.
